Your SAT Vendor Says Training Works. Can You Prove It?

Completion rates and phishing click rates do not prove behavior changed. Impact Proof tracks what employees actually do before, during, and after any intervention.

December. Your organization just finished its annual Security Awareness Training. The numbers look great: 95% completion rate. Average satisfaction score of 4.6 out of 5. The vendor sends you a polished report showing how well the program was received.

You forward the results to leadership. The CFO approves the renewal. Everyone agrees the training was a success.

Here is the question nobody asked: did employees actually change how they behave?

The completion rate tells you people finished the training. The satisfaction score tells you they did not dislike it. Neither tells you whether a single person handles email differently, authenticates more carefully, or shares data more responsibly than they did before the training started.

Did they love the training? Or did they just love that it was over?

You do not know. The vendor does not know either. Nobody has been measuring the thing that actually matters.

Completion Rates Are Not Evidence of Change

The Security Awareness Training industry has operated on a particular assumption for two decades: if people complete training, they become more secure. The metrics that vendors report — completion rates, phishing simulation click rates, quiz scores — all reinforce this assumption by measuring activity.

Activity is not behavior.

A 95% completion rate means 95% of employees clicked through the modules. It does not mean any of them changed how they operate. A 12% phishing click rate means 12% of employees clicked a simulated phishing email. It does not tell you whether the 88% who did not click are actually behaving more securely in their day-to-day work, or whether they simply recognized the simulation format.

This is not a criticism of training itself. Training can be valuable. The problem is the measurement gap — the complete absence of tools that tell you whether training (or any other intervention) actually produced a behavioral change.

Praxis Navigator does not compete with SAT platforms. It does not deliver training. It does not run phishing simulations. It measures whether those tools are working. Think of it as the independent verification layer that the industry has been missing.

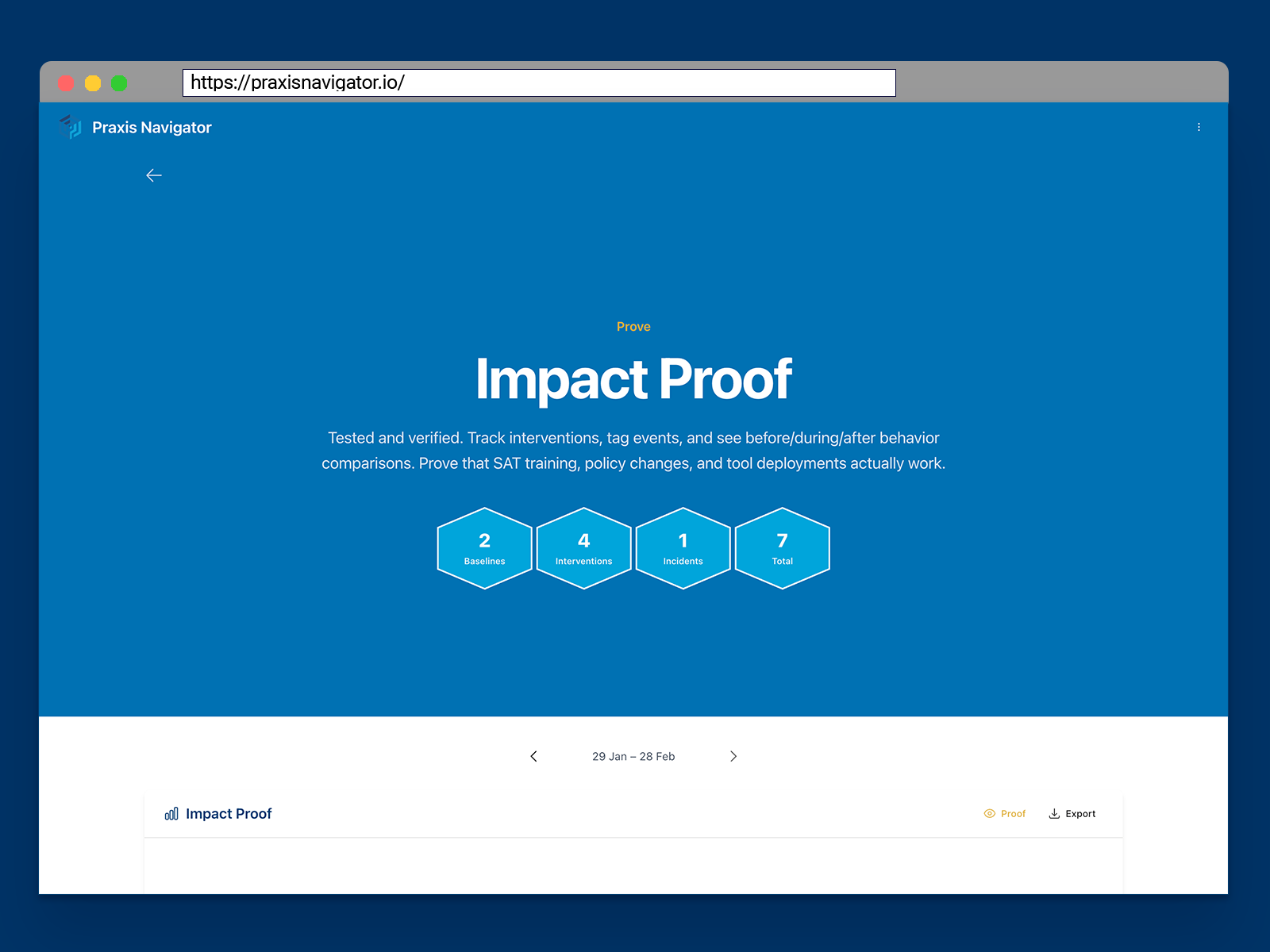

Impact Proof: Before, During, and After — Automatically

Praxis Navigator Impact Proof is the module that closes the measurement loop. It works by tracking behavioral data around any intervention you choose to tag — training programs, policy changes, tool deployments, awareness campaigns — and automatically generating before, during, and after comparisons.

The process is straightforward. You tag an event in the timeline: “SAT training completed December 15” or “new email policy enforced March 1.” Impact Proof then uses the rolling internal baselines established by Risk Bearing, another Praxis Navigator module, to compare employee behaviors in the period before the intervention, during the transition, and in the weeks and months that follow.

The result is clear evidence of three things: immediate impact (did behaviors change right after?), short-term retention (did the change hold over the following weeks?), and long-term effect (did behaviors permanently shift, or did they revert to baseline?).

This is not a self-reported survey. It is not an opinion poll. It is observed behavioral data from your own Microsoft 365 environment, compared against your own internal baselines. The evidence either shows a change or it does not.
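To make the before-and-after logic concrete, here is a minimal Python sketch of a baseline-versus-window comparison. It is a hypothetical illustration, not how Praxis Navigator computes Impact Proof. The example metric (a daily count of external email forwards), the baseline length, the window boundaries, the 10% threshold, and the names `impact_profile` and `window_mean` are all assumptions chosen for the example.

```python
from statistics import mean

# Hypothetical windows, in days relative to the tagged intervention (day 0).
# They map to the three questions: immediate impact, short-term retention,
# and long-term effect. The boundaries are illustrative assumptions.
WINDOWS = {
    "immediate": (0, 7),
    "short_term": (7, 42),
    "long_term": (42, 120),
}


def window_mean(series, start, end):
    """Average of a daily metric over day offsets in [start, end)."""
    values = [value for day, value in series.items() if start <= day < end]
    return mean(values) if values else None


def impact_profile(series, baseline=(-60, 0), threshold=0.10):
    """Compare each post-intervention window with the pre-intervention baseline.

    Returns, per window, the relative change from the baseline mean and
    whether that change is at least `threshold` (10% by default, an
    arbitrary assumption rather than a validated cut-off).
    """
    base = window_mean(series, *baseline)
    profile = {}
    for name, (start, end) in WINDOWS.items():
        current = window_mean(series, start, end)
        if base in (None, 0) or current is None:
            profile[name] = None  # not enough data to compare
            continue
        change = (current - base) / base
        profile[name] = {"change": round(change, 3), "shifted": abs(change) >= threshold}
    return profile


# Synthetic data: 10 external forwards per day before the intervention.
baseline_days = {day: 10.0 for day in range(-60, 0)}

# Program A: forwards drop to 6 per day and stay there.
series_a = {**baseline_days, **{day: 6.0 for day in range(0, 120)}}

# Program B: forwards drop to 6 per day for about 10 days, then revert.
series_b = {**baseline_days, **{day: 6.0 if day < 10 else 10.0 for day in range(0, 120)}}

profile_a = impact_profile(series_a)
profile_b = impact_profile(series_b)
print("Program A:", profile_a)
print("Program B:", profile_b)
```

With these synthetic numbers, both programs show a 40% drop in the immediate window, but only Program A's shift still clears the threshold in the short-term and long-term windows; Program B's series is back at its baseline by the long-term window. A real comparison would also need to account for seasonality, staffing changes, and other events overlapping the tagged intervention.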

The Baseline → Intervention → Compare → Prove Loop

Impact Proof does not work in isolation. It depends on the measurement infrastructure built by the other modules in Praxis Navigator.

Employee Pulse provides the behavioral data — the raw signal of what employees are actually doing across the organization. Risk Bearing establishes the rolling internal baselines that serve as the “before” in any before-and-after comparison. Without reliable baselines, there is no reference point. Without behavioral data, there is nothing to compare.

Impact Proof sits on top of both and completes the loop:

Baseline — Risk Bearing establishes what normal behavior looks like, at every level and timeframe.

Intervention — Something changes. Training, a new policy, a tool deployment, an awareness campaign.

Compare — Impact Proof automatically compares behaviors before and after the intervention.

Prove — The evidence shows whether the intervention worked, how strongly, and how long the effect lasted.

This loop is what no other tool in the market currently provides. SAT vendors can tell you people completed training. Phishing simulation vendors can tell you who clicked. Nobody else can tell you whether actual day-to-day behaviors changed as a result.

Two Trainings, Two Very Different Outcomes

Here is where this becomes concrete. A mid-sized production company runs two different SAT programs in the same year. Program A in March, Program B in September. Both achieve high completion rates. Both receive positive satisfaction scores from employees. On paper, both programs are equally successful.

Impact Proof tells a different story.

Program A shows a measurable shift in email forwarding behavior that persists for more than three months. Employees in the departments that completed the training handle external sharing differently, and the change still holds when measured against the quarterly baseline.

Program B shows an initial behavioral shift that lasts approximately 10 days. By the end of the second week, behaviors have reverted to pre-training baseline levels. The training was completed, enjoyed, and forgotten.

Without Impact Proof, both programs look identical in the vendor reports. The IT leader renews both contracts, and the organization keeps paying for a program that produced lasting change and a program that produced nothing measurable.

With Impact Proof, the IT leader walks into the budget meeting with evidence. Program A demonstrably changed behavior. Program B did not. The conversation shifts from “should we keep spending on training?” to “which training actually works, and how do we do more of it?”

That is the difference between activity reporting and behavioral evidence.

The Full Picture: From Visibility to Proof

This post is the fourth in our “Closing the Visibility Gap” series, and it completes the core measurement loop.

Employee Pulse gives you visibility — you can see what is happening right now. Risk Bearing gives you context — you can see whether things are improving or declining. Stakeholder Brief gives you communication — the right information reaches the right people in the right language. Impact Proof gives you evidence — you can prove what works and stop what does not.

Together, these four capabilities deliver what the industry has been promising for two decades without actually providing: a way to know whether your security investments are changing how people behave.

The final post in this series will step back and look at the full picture — the visibility gap in human security, what it costs, and how this measurement loop closes it. If you have been following along, that one will tie everything together.

See If Your Current Tools Are Actually Working

If you are renewing SAT contracts, approving security budgets, or reporting on training effectiveness, Impact Proof gives you the evidence to make those decisions based on data rather than vendor reports.

Not sure how to frame the financial case? The Praxis Navigator Security Behavior ROI Calculator generates a board-ready PDF report using IBM, Verizon DBIR, and BLS benchmark data in under two minutes — free, no email required.

Ready to measure your security culture?

Connect your Microsoft 365 environment and see months of employee security behavior data in 15 minutes. Free 30-day trial.
